Why I'm Writing This

I've been building with serverless since 2021.

Not just tinkering — using it as the primary architectural choice for production systems, advocating for it in hiring conversations, writing about it, giving talks about it, and making it the backbone of my graduate thesis on cloud-native architecture.

And yet, the question I get asked most often isn't about DynamoDB access patterns or cold start optimization. It's this:

"How do I actually know when I've done it right?"

That question has a longer answer than most people expect. That's what this blog is for.

The gap nobody warns you about

There's a very seductive version of serverless that gets sold in conference talks and documentation pages. Deploy a function. It scales. You pay nothing when it's idle. Zero infrastructure to manage.

All of that is true. None of it prepares you for production.

The real learning curve in serverless isn't writing functions — it's understanding the execution model well enough to make good decisions under pressure. Why does your function behave differently under concurrent load? Why is that DynamoDB error only happening in production? Why did your SQS queue suddenly back up overnight with no error rate spike?

The answers to these questions all trace back to the same place: how Lambda actually works, and how the services around it actually behave. Not in theory. In practice.

What "practical" means here

I'm not going to write tutorials that walk you through creating an S3 bucket. There are plenty of those. What I want to write — and what I wish had existed when I was figuring this out — is the thinking behind the decisions.

Why you should treat the handler as an entry point and nothing more.

Why idempotency isn't optional the moment you introduce asynchronous processing.

Why that IAM wildcard that "works fine" is a problem you haven't encountered yet.

Why your local environment is an approximation, and which differences will actually matter.

Each post will take a concept that looks simple from the outside and show you what it actually looks like from inside a running production system.

Where this comes from

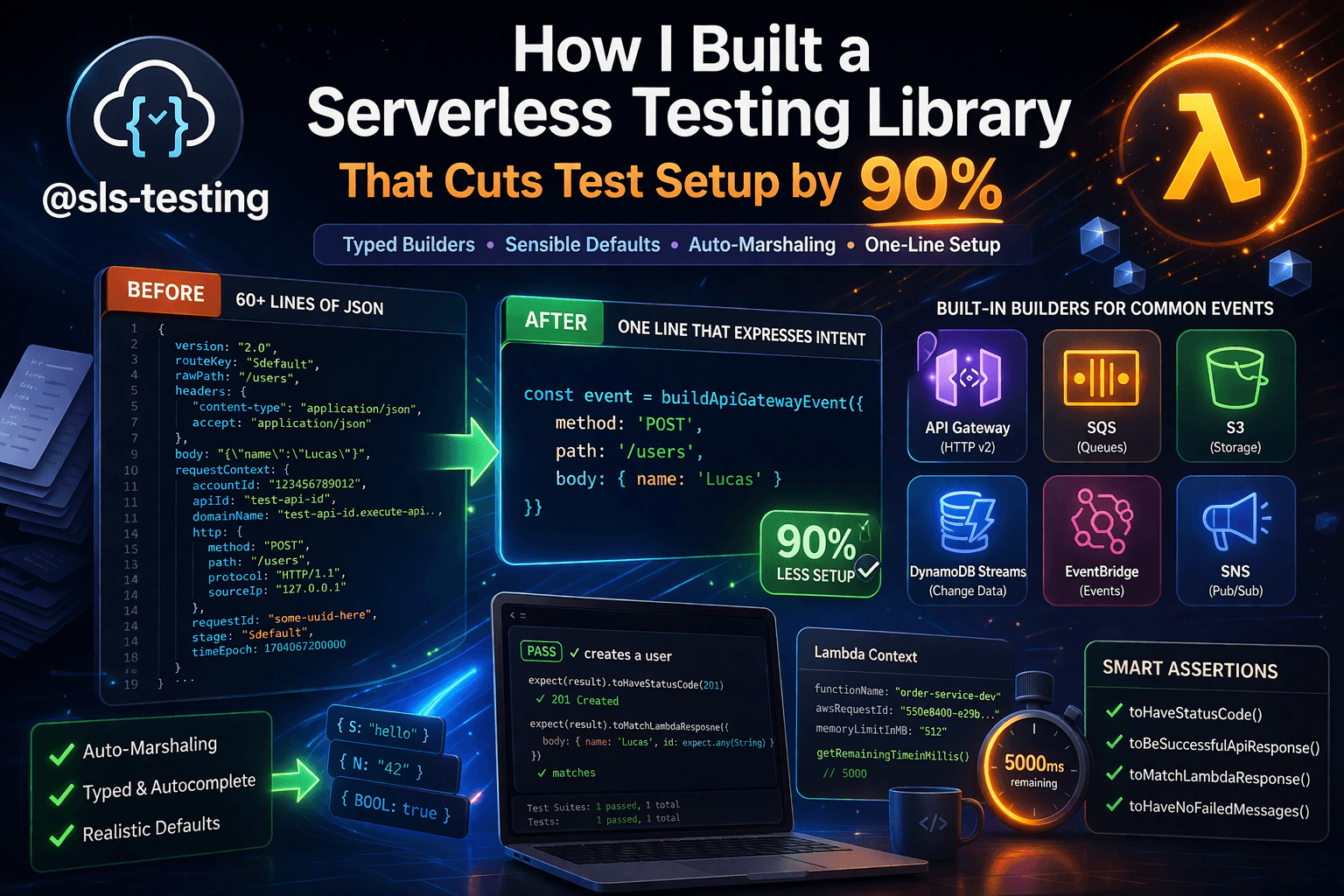

My day job is backend engineering on a growth team at a SaaS company. We run a serverless-first stack on AWS: Lambda, DynamoDB, SQS, EventBridge, API Gateway. I've shipped billing systems, built MCP-powered tooling, modernized test infrastructure, and handled zero-downtime schema migrations — all within this architecture.

I've also made most of the mistakes worth making. Misconfigured IAM roles that only failed at runtime. A trigger loop I caught in staging, barely. An SQS processor that quietly stopped processing because I hadn't understood partial batch failures. An observability gap that turned a 20-minute incident into a 3-hour one.

That's not a credentials flex. It's context. The patterns I write about here have been tested in production, the only environment that really matters.

What's coming

I'll publish roughly twice a month. No rigid structure — just whatever's most worth writing about. Some posts will be conceptual, building the mental models that underpin everything else. Some will be deeply technical: specific patterns, concrete code, tradeoffs spelled out in full.

A few topics already in the pipeline:

The execution environment, actually explained — what init, invoke, and shutdown mean for the code you write every day

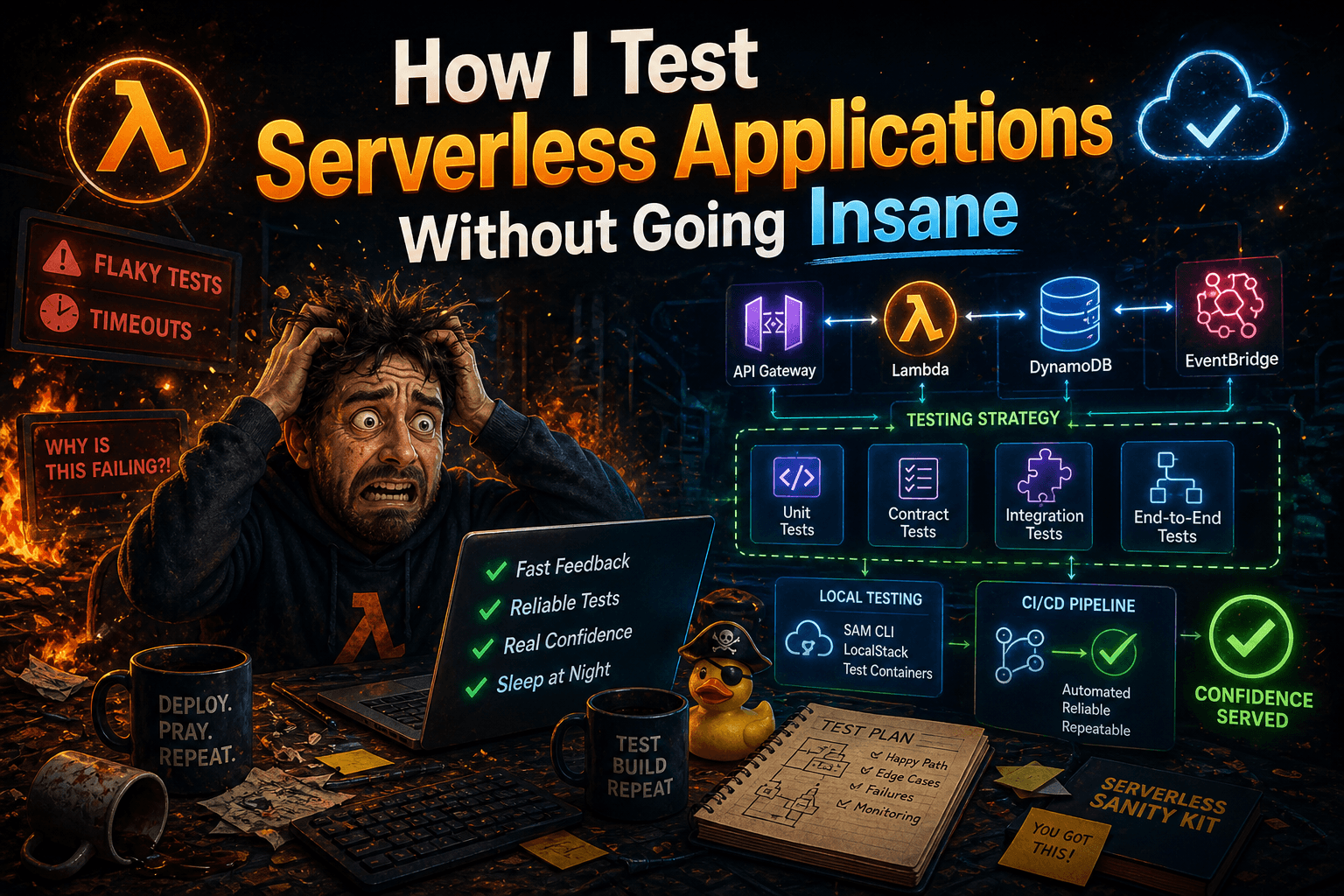

Why your tests pass and production still breaks — the serverless testing gap and how to close it

IAM for people who don't want to read the entire IAM docs — least privilege, per-function roles, and the wildcards that will eventually hurt you

Idempotency from scratch — because "process it once" is harder than it sounds when Lambda will retry anything that fails

If there's something specific you've been struggling with, I want to hear it. The goal of this blog is to be useful — not to document what I already know, but to address the questions you're actually asking.

One more thing.

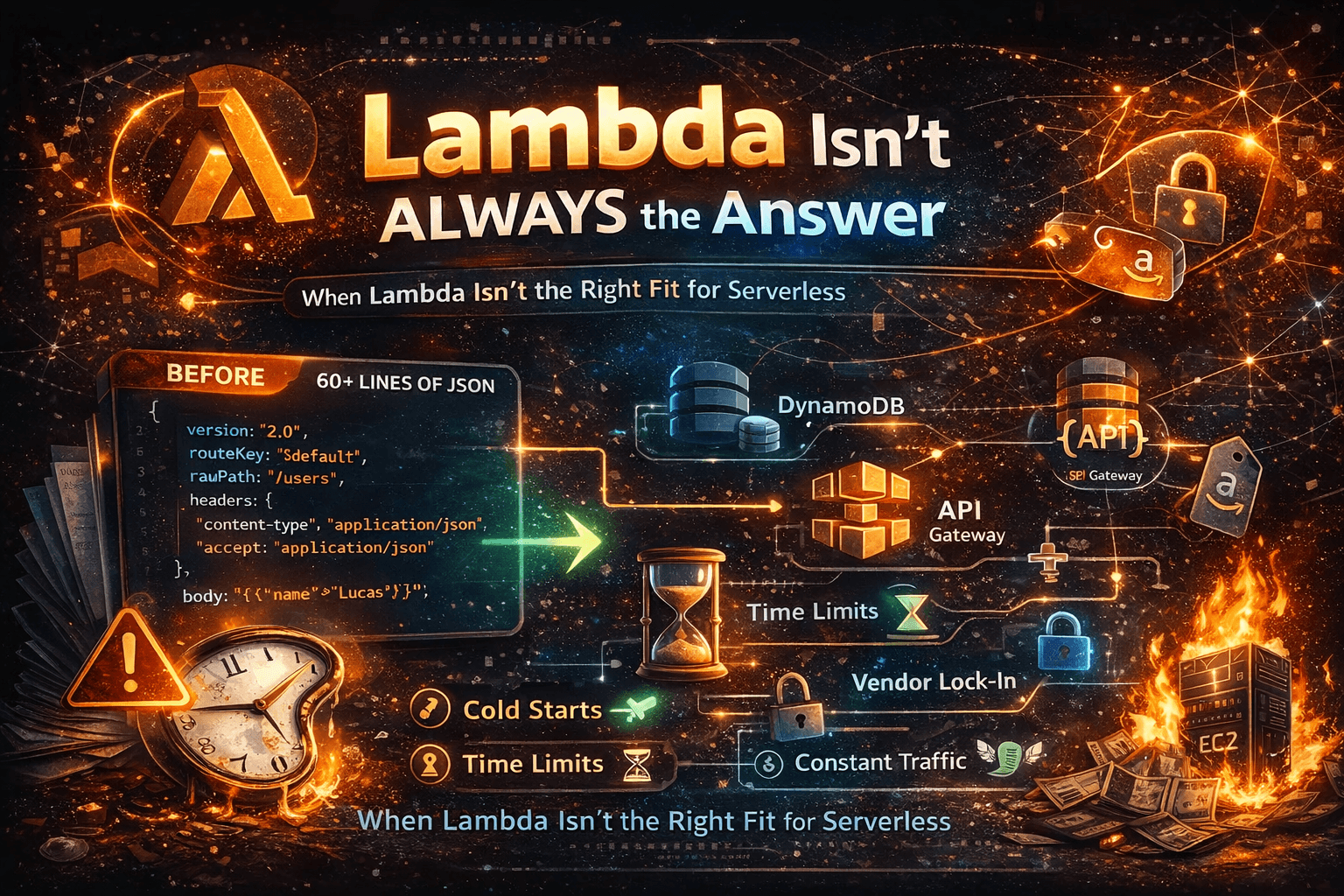

Serverless isn't perfect. It's not always the right choice. I'll say so when it isn't. The best thing I can offer here isn't enthusiasm — it's honesty about where the edges are and what happens when you hit them.

Let's get into it.

Lucas Brogni is a Senior Software Engineer with 10+ years of experience building distributed systems.