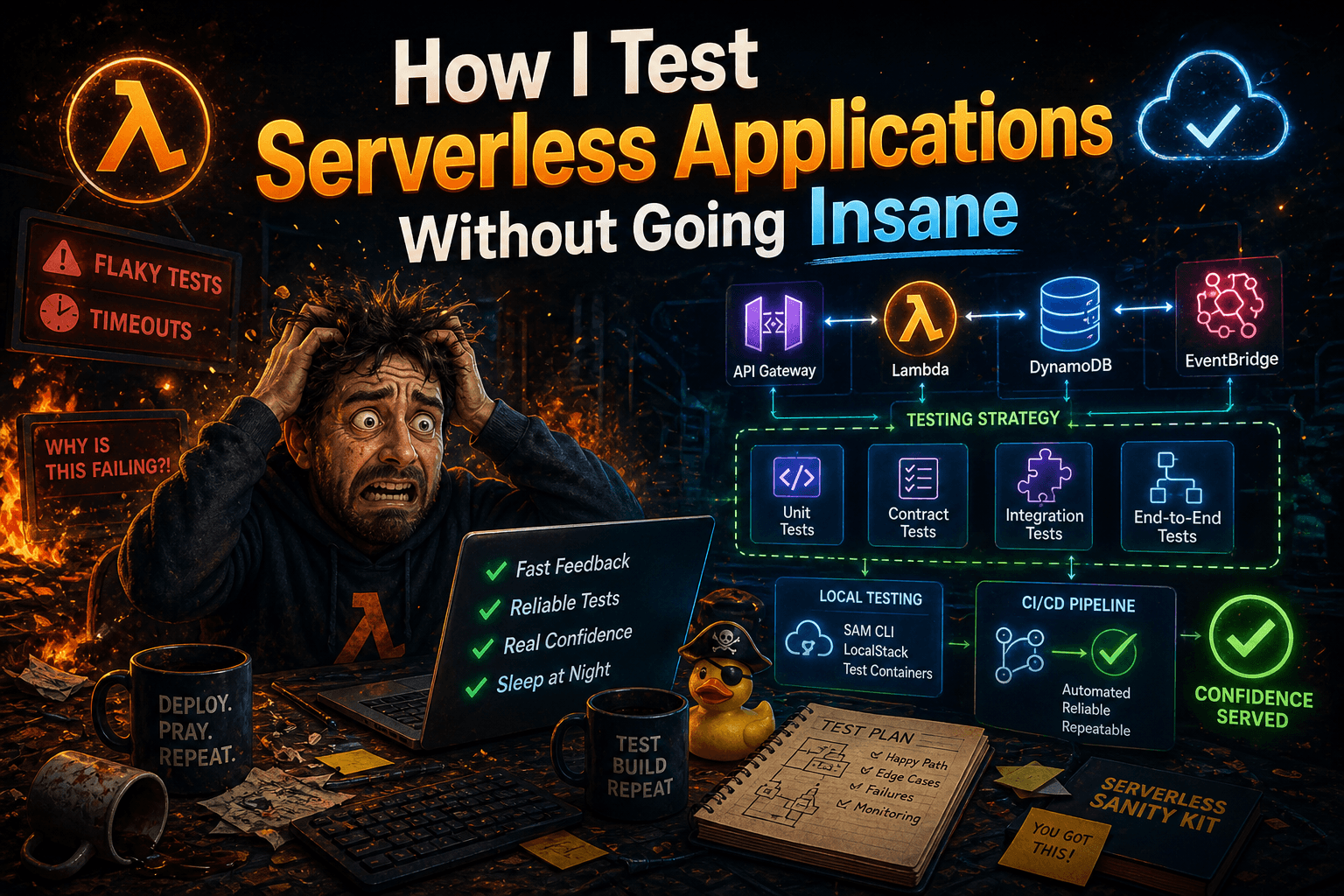

How I Test Serverless Applications Without Going Insane

Testing serverless isn't hard. Testing serverless well is a different story.

After years of building Lambda-based systems, I've seen the full spectrum: no tests at all, tests that mock everything and catch nothing, and test suites so slow they're never run in CI. I've made all those mistakes myself.

This post discusses the approach I find most effective. It's not about comparing frameworks or libraries; rather, it's the setup I consider best for incorporating tests into a serverless application.

The Problem With "Just Test Your Functions"

The most common advice you'll hear is "Lambda functions are just functions: they're easy to test." And that's technically true. You can, and honestly should, add unit tests for each Lambda function in isolation.

The problem is that most of the interesting bugs in serverless systems don't live inside the function logic. They live at the edges:

The IAM permission that works locally but not in prod

The SQS message that's shaped differently than you expected

The DynamoDB expression that silently returns nothing instead of erroring

The S3 event notification that fires but your handler throws before processing it

The Lambda that keeps triggering itself because it writes its output to the same S3 bucket it reads from

If your entire test strategy is mocking aws-sdk and asserting on return values, none of these bugs will ever show up in your tests.
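To make one of those edges concrete, take the self-triggering Lambda from the list above. A minimal sketch of a guard, assuming a hypothetical convention where uploads land under `incoming/` and results are written under `processed/` (the prefixes, type, and function names are mine, for illustration only):

```typescript
// Minimal local shape of the parts of an S3 event record used here.
interface S3RecordLike {
  s3: { bucket: { name: string }; object: { key: string } };
}

// Hypothetical key convention, not something the post prescribes.
const INPUT_PREFIX = 'incoming/';
const OUTPUT_PREFIX = 'processed/';

// S3 URL-encodes object keys in event notifications and uses "+" for spaces.
function decodeS3Key(rawKey: string): string {
  return decodeURIComponent(rawKey.replace(/\+/g, ' '));
}

// Only objects under the input prefix are processed, so the handler's own
// output (written under the output prefix) can never re-trigger the work.
function shouldProcess(record: S3RecordLike): boolean {
  return decodeS3Key(record.s3.object.key).startsWith(INPUT_PREFIX);
}

// Maps a decoded input key to the key the result is written to.
function outputKeyFor(inputKey: string): string {
  return OUTPUT_PREFIX + inputKey.slice(INPUT_PREFIX.length);
}
```

A guard like this is easy to unit test, but only a test against a real or emulated bucket notification tells you whether the trigger is actually scoped the way you think it is.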

The Three-Layer Model I Actually Use

I think about serverless testing in three layers, and I'm deliberate about what each one covers.

Layer 1: Unit Tests — Test the Logic, Not the Infrastructure

Unit tests should be fast, isolated, and abundant. But "isolated" doesn't mean "mock everything."

Here's the distinction I make: I don't mock my dependencies. I replace them with Fakes.

A mock is a hollow stub — you tell it what to return, it returns exactly that, and it never pushes back. A Fake is a real, working implementation of the same interface, just without the infrastructure. It has actual logic: it stores items in memory, enforces constraints, and throws an error on invalid input. It behaves like the real thing, just without DynamoDB behind it.

    // Assumed minimal shape; the examples in this post only rely on id and email
    interface User {
      id: string;
      email: string;
    }

    // The interface both the real implementation and the Fake satisfy
    interface UserRepository {
      save(user: User): Promise<void>;
      findById(id: string): Promise<User | null>;
    }

    // The Fake: real behavior, no infrastructure
    class InMemoryUserRepository implements UserRepository {
      private store = new Map<string, User>();

      async save(user: User): Promise<void> {
        if (!user.id) throw new Error('User must have an id');
        this.store.set(user.id, user);
      }

      async findById(id: string): Promise<User | null> {
        return this.store.get(id) ?? null;
      }
    }

Now your unit tests use InMemoryUserRepository and they actually mean something. If your handler calls findById with the wrong ID, the Fake returns null, just as a DynamoDB-backed repository would when the lookup finds no item. You're testing real behavior, not scripted responses.

    describe('processOrder', () => {
      it('should reject orders with no items', async () => {
        const repo = new InMemoryUserRepository();
        const result = await processOrder({ items: [] }, repo);

        expect(result.success).toBe(false);
        expect(result.error).toBe('ORDER_EMPTY');
      });

      it('should not persist an order that fails validation', async () => {
        const repo = new InMemoryUserRepository();
        await processOrder({ items: [] }, repo);

        // The Fake lets us assert on actual state, not on whether a method was called
        expect(await repo.findById('order-123')).toBeNull();
      });
    });

Fast. Deterministic. And honest about what your code actually does.

The payoff of Fakes comes later: you can run contract tests that exercise both the Fake and the real implementation against the same suite. If DynamoUserRepository and InMemoryUserRepository both pass the same contract tests, you know the Fake matches the real implementation for every behavior the contract covers, and the unit tests built on it carry much of the confidence of your integration tests.

    // The contract: runs against both implementations
    function userRepositoryContract(makeRepo: () => UserRepository) {
      it('should persist and retrieve a user', async () => {
        const repo = makeRepo();
        await repo.save({ id: 'u1', email: 'test@example.com' });
        expect(await repo.findById('u1')).toMatchObject({ id: 'u1' });
      });

      it('should return null for unknown IDs', async () => {
        const repo = makeRepo();
        expect(await repo.findById('nonexistent')).toBeNull();
      });
    }

    // Run the contract against both.
    // `client` is assumed to be a DynamoDB client created elsewhere in the test setup.
    describe('InMemoryUserRepository', () => userRepositoryContract(() => new InMemoryUserRepository()));
    describe('DynamoUserRepository', () => userRepositoryContract(() => new DynamoUserRepository(client, 'users-test')));

This pattern does require more upfront design — your dependencies need to be behind interfaces, and you need to maintain the Fakes. That's not free. But it pays back in test confidence and, honestly, in better code architecture. If something is hard to Fake, it's usually a sign that the dependency boundary is wrong.
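Here is roughly what "behind interfaces" can look like on the handler side. This is a minimal sketch, not a prescribed structure: `makeGetUserHandler`, `GetUserEvent`, `HttpResult`, and the error codes are hypothetical names, and the `User` shape is assumed.

```typescript
// Assumed minimal shapes; the post does not define User or the event type.
interface User { id: string; email: string }

interface UserRepository {
  save(user: User): Promise<void>;
  findById(id: string): Promise<User | null>;
}

class InMemoryUserRepository implements UserRepository {
  private store = new Map<string, User>();
  async save(user: User): Promise<void> {
    if (!user.id) throw new Error('User must have an id');
    this.store.set(user.id, user);
  }
  async findById(id: string): Promise<User | null> {
    return this.store.get(id) ?? null;
  }
}

interface GetUserEvent { pathParameters?: { id?: string } | null }
interface HttpResult { statusCode: number; body: string }

// Hypothetical factory: the handler closes over whichever repository it is given,
// so tests pass the Fake and the deployed entry point passes the real one.
function makeGetUserHandler(repo: UserRepository) {
  return async (event: GetUserEvent): Promise<HttpResult> => {
    const id = event.pathParameters?.id;
    if (!id) return { statusCode: 400, body: JSON.stringify({ error: 'MISSING_ID' }) };

    const user = await repo.findById(id);
    if (!user) return { statusCode: 404, body: JSON.stringify({ error: 'USER_NOT_FOUND' }) };

    return { statusCode: 200, body: JSON.stringify(user) };
  };
}
```

In a unit test you would build the handler with `makeGetUserHandler(new InMemoryUserRepository())`; the deployed module would export the same factory called with the DynamoDB-backed repository. The handler code itself never knows the difference.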

What I cover at this layer:

Business logic and domain rules

Input validation and error handling

Event parsing and transformation

Edge cases and branching paths
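As an illustration of the "event parsing and transformation" item, here is a sketch of a pure parsing function for an SQS record. The `OrderPlaced` message shape and the error codes are assumptions made up for this example; the point is that the parser is plain logic and needs no AWS at all to test.

```typescript
// Minimal local shape of the SQS record fields used here.
interface SqsRecordLike { messageId: string; body: string }

// Hypothetical message contract for this example.
interface OrderPlaced { orderId: string; items: string[] }

type ParseResult =
  | { ok: true; value: OrderPlaced }
  | { ok: false; error: 'INVALID_JSON' | 'INVALID_SHAPE' };

// Turns an untrusted record body into a typed message, or a named error.
function parseOrderPlaced(record: SqsRecordLike): ParseResult {
  let raw: unknown;
  try {
    raw = JSON.parse(record.body);
  } catch {
    return { ok: false, error: 'INVALID_JSON' };
  }

  if (typeof raw !== 'object' || raw === null) {
    return { ok: false, error: 'INVALID_SHAPE' };
  }

  const { orderId, items } = raw as Record<string, unknown>;
  if (typeof orderId !== 'string' || orderId.length === 0) {
    return { ok: false, error: 'INVALID_SHAPE' };
  }
  if (!Array.isArray(items) || !items.every((item) => typeof item === 'string')) {
    return { ok: false, error: 'INVALID_SHAPE' };
  }

  return { ok: true, value: { orderId, items } };
}
```

Each branch here is one cheap unit test: valid body, broken JSON, missing field, wrong field type.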

What I don't bother covering here:

Whether the DynamoDB call actually works

Whether my IAM role has the right permissions

Whether the Lambda timeout is long enough

Layer 2: Integration Tests — Test the Real AWS Interactions

This is where most projects fall apart. Developers either skip integration tests entirely (dangerous) or try to run all of them against real AWS (slow and expensive).

My approach: run integration tests against a local AWS emulator during development and CI, and run a smaller set against a real staging environment before merging to main.

For local emulation, I've been working on tooling around this exact problem — more on that in a future post. For now, the important thing is the principle: your integration tests should exercise actual AWS API calls, not mocked ones.
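One way to keep the same test code pointed at either an emulator or real AWS is to resolve the client configuration in a single place. The sketch below is an assumption-heavy illustration: `TEST_AWS_ENDPOINT` is a variable name I made up, the dummy credentials assume an emulator that accepts any key pair, and the returned object only mirrors the `region`, `endpoint`, and `credentials` options that AWS SDK for JavaScript v3 clients accept.

```typescript
// Subset of the options accepted by AWS SDK for JavaScript v3 clients.
interface AwsClientConfig {
  region: string;
  endpoint?: string;
  credentials?: { accessKeyId: string; secretAccessKey: string };
}

// Returns emulator settings when TEST_AWS_ENDPOINT (hypothetical name) is set,
// otherwise leaves endpoint and credentials to the SDK's default resolution.
function resolveAwsClientConfig(env: Record<string, string | undefined>): AwsClientConfig {
  const region = env.AWS_REGION ?? 'us-east-1';
  const endpoint = env.TEST_AWS_ENDPOINT;

  if (!endpoint) {
    return { region };
  }

  return {
    region,
    endpoint,
    // Assumption: the emulator does not validate credentials.
    credentials: { accessKeyId: 'test', secretAccessKey: 'test' },
  };
}
```

The result can be passed straight to a client constructor, for example `new DynamoDBClient(resolveAwsClientConfig(process.env))` from `@aws-sdk/client-dynamodb`, so the tests themselves never mention where AWS lives.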

What I cover at this layer:

DynamoDB reads and writes (real expressions, real shapes)

SQS send and receive (including batch behavior)

S3 put/get/delete

EventBridge publish and filtering rules

Any service-to-service interaction within your system

    // Example: Real DynamoDB interaction, real data shape
    describe('DynamoUserRepository', () => {
      it('should persist and retrieve a user by ID', async () => {
        // UserRepository is an interface, so the test instantiates the DynamoDB-backed class
        const repo = new DynamoUserRepository(dynamoClient, 'users-test');
        const user = { id: 'user-123', email: 'test@example.com', createdAt: Date.now() };

        await repo.save(user);
        const retrieved = await repo.findById('user-123');

        expect(retrieved).toMatchObject(user);
      });
    });

Yes, this test talks to a database. That's the point.
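The "batch behavior" item in the list above deserves its own example, because it is a classic place for surprises. A minimal sketch of a consumer that reports partial failures, assuming `ReportBatchItemFailures` is enabled on the SQS event source mapping (`processBatch` and `handleOne` are hypothetical names; the `batchItemFailures` / `itemIdentifier` response shape is the one Lambda expects in that mode):

```typescript
// Minimal local shapes; only the fields this sketch touches.
interface SqsRecordLike { messageId: string; body: string }
interface SqsBatchResponse { batchItemFailures: { itemIdentifier: string }[] }

// Processes every record and reports only the failed message IDs,
// so successfully handled messages are not redelivered with the rest of the batch.
async function processBatch(
  records: SqsRecordLike[],
  handleOne: (record: SqsRecordLike) => Promise<void>,
): Promise<SqsBatchResponse> {
  const batchItemFailures: { itemIdentifier: string }[] = [];

  for (const record of records) {
    try {
      await handleOne(record);
    } catch {
      batchItemFailures.push({ itemIdentifier: record.messageId });
    }
  }

  return { batchItemFailures };
}
```

The logic is unit-testable, but whether failed messages really come back and successful ones really disappear is exactly the kind of thing only an integration test against a queue can confirm.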

Layer 3: E2E Tests — Test the System, Not the Pieces

End-to-end tests in serverless are expensive to run and slow to write. I keep this layer thin on purpose.

My rule: one E2E test per critical user journey, not per endpoint.

For a typical API, that might mean:

A user can sign up and receive a confirmation email

A payment can be initiated and the webhook handled correctly

A file upload triggers processing and the result is available

These tests deploy real infrastructure (or use a dedicated staging environment) and exercise the full stack. They're not run on every commit — they run on every PR merge to staging, and before every production deploy.

What I cover here:

Critical paths that involve multiple Lambda functions

Event-driven flows (trigger → process → result)

Anything where the cost of failure is high
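Event-driven flows are asynchronous, so an E2E test usually has to trigger something and then poll until the result shows up. Here is a small polling helper as a sketch; `waitFor`, its option names, and the default timings are my own choices for illustration, not part of any library mentioned in this post.

```typescript
interface WaitOptions { timeoutMs?: number; intervalMs?: number }

const sleep = (ms: number) => new Promise<void>((resolve) => setTimeout(resolve, ms));

// Calls `probe` until it returns something other than null/undefined,
// or throws once the next attempt would land past the deadline.
async function waitFor<T>(
  probe: () => Promise<T | null | undefined>,
  { timeoutMs = 30_000, intervalMs = 500 }: WaitOptions = {},
): Promise<T> {
  const deadline = Date.now() + timeoutMs;

  while (true) {
    const result = await probe();
    if (result !== null && result !== undefined) return result;

    if (Date.now() + intervalMs > deadline) {
      throw new Error(`Condition not met within ${timeoutMs}ms`);
    }
    await sleep(intervalMs);
  }
}
```

In a "file upload triggers processing" journey, the test would upload the file and then call something like `await waitFor(() => repo.findById('user-123'))`, failing with a clear timeout instead of a flaky fixed sleep.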

The Testing Stack

Here's what I actually use, without the fluff:

Jest — test runner, all layers

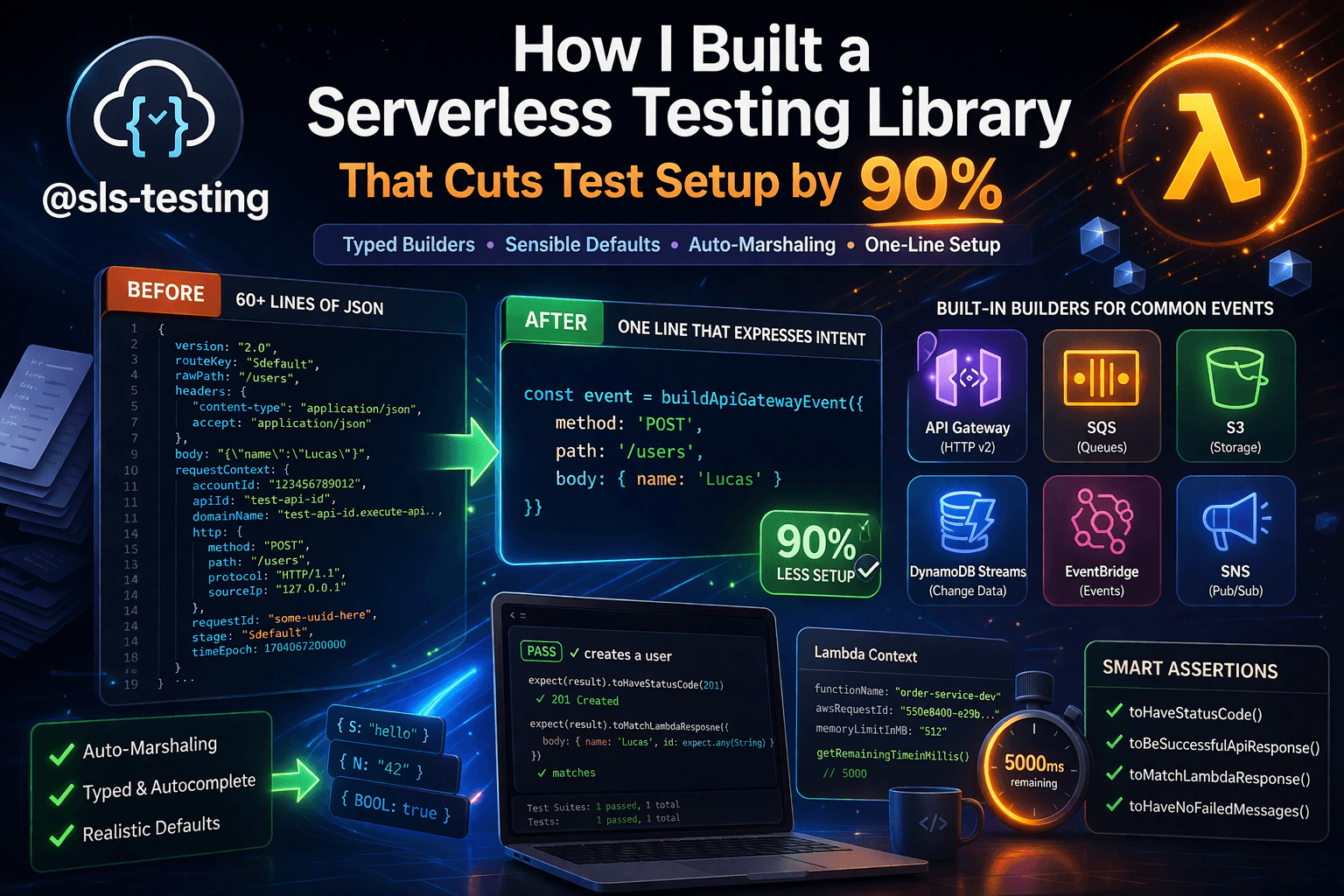

@sls-testing/jest — utilities I built specifically for Lambda testing (event factories, context mocks, assertion helpers)

Local emulator — for integration tests in CI (more on this soon)

Real AWS staging — for E2E tests, isolated account or dedicated stage
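If "event factories" and "context mocks" sound abstract, here is roughly what hand-rolled versions could look like. To be clear, this is not the API of @sls-testing/jest; the function and type names are hypothetical, and the shapes cover only a handful of the fields found on an API Gateway proxy event and a Lambda context object.

```typescript
// Partial shapes: only the fields these sketches fill in.
interface ApiGatewayEventLike {
  httpMethod: string;
  path: string;
  headers: Record<string, string>;
  pathParameters: Record<string, string> | null;
  body: string | null;
}

interface LambdaContextLike {
  functionName: string;
  awsRequestId: string;
  getRemainingTimeInMillis(): number;
}

// Builds a valid default event; each test overrides only what it cares about.
function makeApiGatewayEvent(overrides: Partial<ApiGatewayEventLike> = {}): ApiGatewayEventLike {
  return {
    httpMethod: 'GET',
    path: '/',
    headers: {},
    pathParameters: null,
    body: null,
    ...overrides,
  };
}

// Builds a context stand-in with fixed, predictable values.
function makeContext(overrides: Partial<LambdaContextLike> = {}): LambdaContextLike {
  return {
    functionName: 'test-function',
    awsRequestId: 'test-request-id',
    getRemainingTimeInMillis: () => 30_000,
    ...overrides,
  };
}
```

The value of a factory is that a test reads as "a POST with this body" rather than forty lines of event boilerplate.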

I'm not going to tell you that you need every tool in this list. Start with Jest and clean unit tests. Add integration tests when you start seeing production bugs that your unit tests didn't catch. Add E2E tests when your system is complex enough to have meaningful user journeys.

Common Mistakes I See (And Made)

1. Mocking the entire AWS SDK

You end up testing that you called putItem with certain parameters, which proves nothing about whether DynamoDB would accept them or what ends up stored. Test the outcome, not the invocation.

2. Testing the framework, not your code

If half your tests are verifying that API Gateway parses the request correctly, you're wasting time. API Gateway already has tests. Yours should cover what happens after the parsing.

3. Coupling tests to infrastructure details

If a test breaks because you renamed a DynamoDB table or changed an environment variable, that's a configuration problem, not a testing problem. Keep infrastructure config out of your test logic.
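One way to do that is to read every infrastructure name in a single place and fail loudly when something is missing. A minimal sketch, with made-up variable names (`USERS_TABLE`, `ORDERS_QUEUE_URL`) standing in for whatever your stack exports:

```typescript
interface TestConfig {
  usersTable: string;
  ordersQueueUrl: string;
}

// Fails with a clear message instead of letting a test hit an undefined table name.
function requireEnv(env: Record<string, string | undefined>, name: string): string {
  const value = env[name];
  if (!value) {
    throw new Error(`Missing required environment variable ${name}`);
  }
  return value;
}

// The only place in the test suite that knows how infrastructure is named.
function loadTestConfig(env: Record<string, string | undefined>): TestConfig {
  return {
    usersTable: requireEnv(env, 'USERS_TABLE'),
    ordersQueueUrl: requireEnv(env, 'ORDERS_QUEUE_URL'),
  };
}
```

Tests then ask for `config.usersTable` and never hard-code 'users-test', so renaming a table means changing the environment, not the tests.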

4. No tests on the async paths

Synchronous request/response flows are easy to test. Async flows — SQS consumers, EventBridge subscribers, Step Functions — are where people give up. Don't. These paths often carry your most critical business logic.

The Bottom Line

My testing strategy in one sentence: unit test the logic, integration test the AWS interactions, E2E test the user journeys — and be ruthless about keeping each layer small and honest.

You don't need 100% coverage. You need the right coverage in the right places.

If this resonated, I'm working on a full ebook on the topic — Testing Serverless Applications (TDD in the Real World) — that goes much deeper on each layer, including contract testing, load testing, and infrastructure testing with CDK. More on that soon.

In the meantime, if you have questions or want to share how you're approaching this, reply to this post or find me on [LinkedIn / X / wherever].

Enjoyed this? The Practical Serverless newsletter covers this kind of stuff every week — no filler, just real serverless patterns from the field.

AI Disclaimer: AI has been utilized to refine the text. The experiences and content are my own.