Lambda isn't always the answer

Yes, this is a serverless blog and obviously the main objective here is to spread the serverless word, and make people feel more comfortable to use it.

But still, we are talking about technology, and to be more precise, about software architecture, and an important part of that is knowing the trade-offs for choosing the right tool for your problem.

Serverless is great, but it doesn't solve all the problems though.

The trade-offs

Let's be real about a few things.

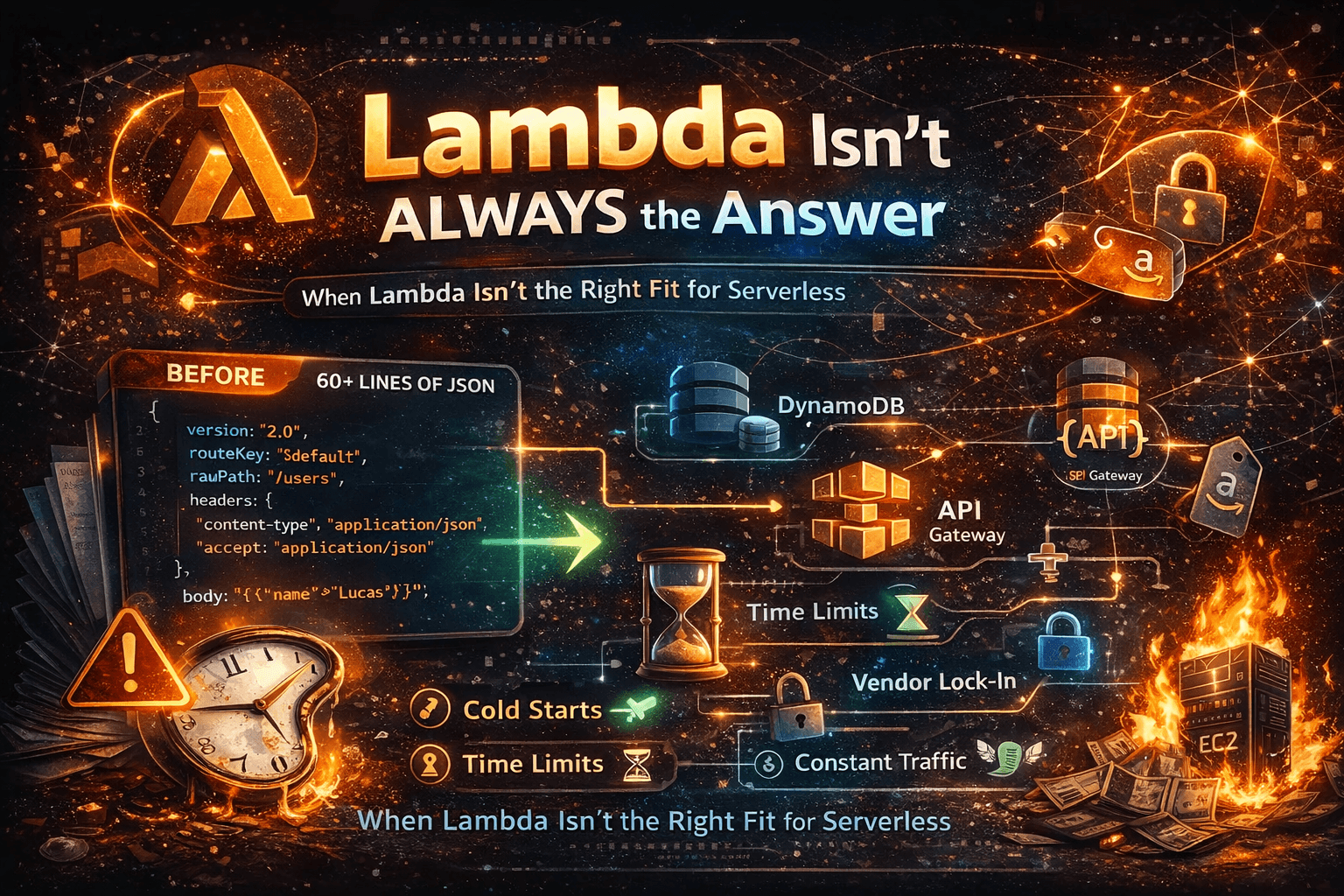

Cold starts hurt in specific situations. If you're building a public-facing API where a small percentage of requests hitting an uninitialized container is acceptable, you'll probably never notice. But if you're building a latency-sensitive application, you will definitely not want to deal with cold starts. It has some workarounds, like Provisioned Concurrency, which solves the problem, but obviously, it adds cost.

Lambdas also have their limitations. You can't run it for over 15 minutes, and the max memory is 10GB, for example.

The observability will be more complicated. With serverless, you're dealing with distributed, short-lived functions that can scale to hundreds of concurrent executions in seconds. Debugging that without proper instrumentation — structured logs, distributed tracing, alerting — is a bad time.

And obviously, there's lock-in. Using managed AWS services is genuinely one of the best parts of going serverless, and also the thing that makes migration expensive if you ever need it. You're making that trade whether you're aware of it or not.

Know these going in. Build with them in mind. Serverless is still worth it — just not blindly.

When Lambda is the wrong choice

Talking about trade-offs is useful, but let's make it concrete. Here are real scenarios where you should think twice before reaching for Lambda.

You need long-running, stateful processes. Video encoding, large data migrations, ML model training — anything that may hit the 15-minute limit or needs to maintain state across steps is a bad fit. You'll end up doing gymnastics with Step Functions to work around Lambda's limits, and at some point, you have to ask: Is this still simpler than just running a container?

Your workload is constant and predictable. Serverless shines when traffic is spiky or unpredictable. If you have a service that runs at steady, high volume 24/7, the pay-per-invocation model stops being cheap. At scale, a reserved EC2 instance or a Fargate service is often more cost-effective. Run the numbers before you commit.

Latency is non-negotiable. Cold starts are manageable in most cases, but if you're building something like a high-frequency trading system or a real-time gaming backend where every millisecond matters, serverless is not your friend. Provisioned Concurrency helps, but it also chips away at the cost model that made serverless attractive in the first place.

Your team is already struggling with observability. Lambda doesn't create observability problems, but it amplifies existing ones. If your team doesn't have solid practices around logging, tracing, and alerting, going serverless will make debugging feel like chasing ghosts. Get the foundation right first.

You're lifting and shifting a monolith. Taking an existing monolithic application and deploying it as a single Lambda function is almost never a good idea. You get the complexity of serverless without any of the benefits. If you're migrating, the work is in decomposing the application, which probably is not worth changing where it runs.

Serverless is more than Lambda

If you've been following the serverless conversation online — or even if you've read my book, From Zero to Production with AWS Lambda — you'd be forgiven for thinking serverless is mostly about Lambda. Lambda gets the blog posts, the talks, the hot takes. But Lambda is just one piece of a much bigger picture.

Serverless is a model, not a service. It's about offloading infrastructure management so you can focus on your business logic. Lambda does that for compute, but the same principle applies across your entire stack.

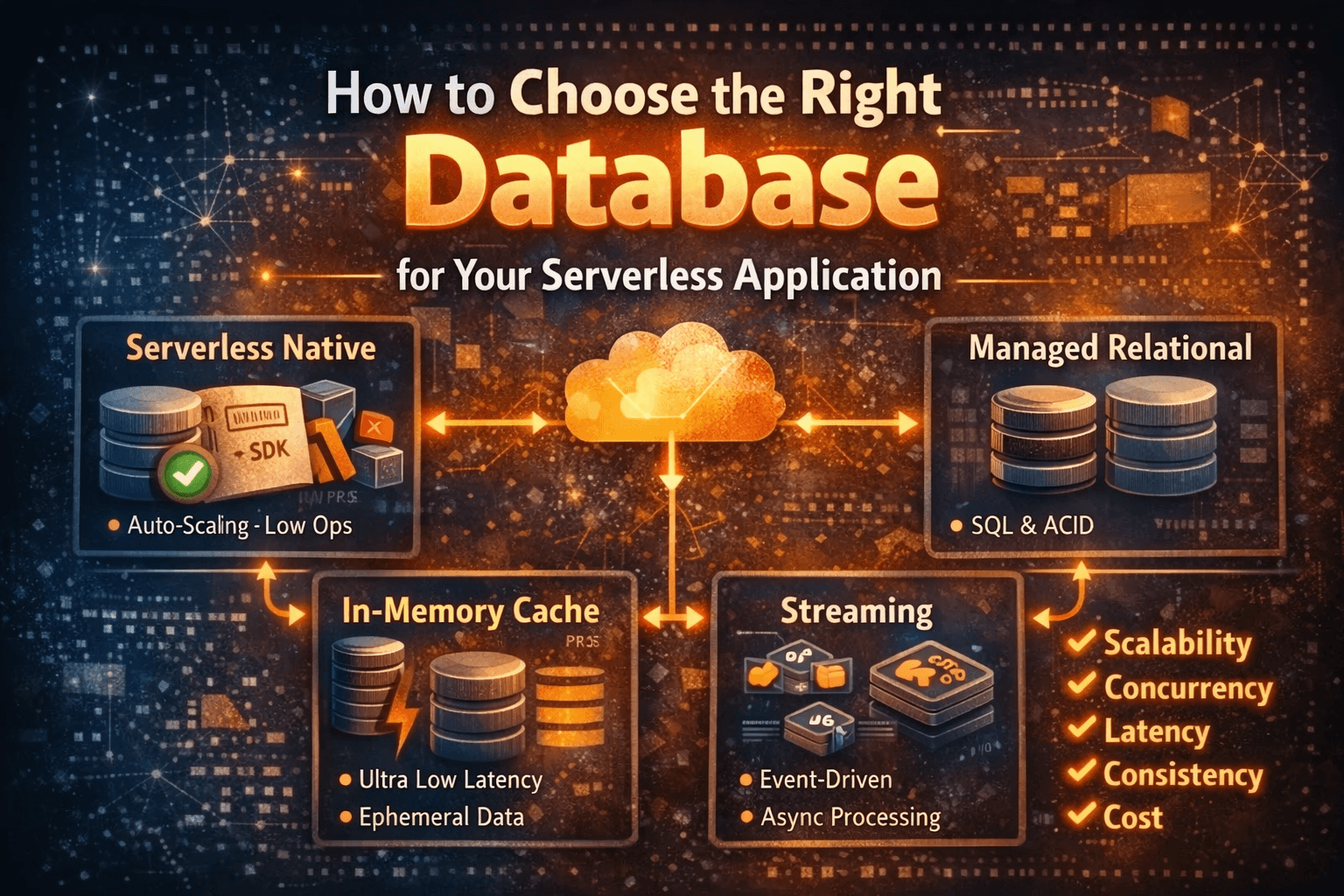

DynamoDB is serverless. You don't manage servers, you don't provision capacity upfront, you just store data and pay for what you use. The same applies to API Gateway, S3, SQS, SNS, and EventBridge — it's all serverless. This can go on and on.

There are serverless options to enhance your stack, and some can even replace Lambdas. For instance, if you have a long-running process or predictable high-volume traffic, Fargate is a great choice. You still avoid managing servers, enjoy automatic scaling, and pay only for what you use. It's serverless, just not Lambda.

When pieces work together, that's when you unlock the real power. A file lands in S3, triggers a Lambda function, which publishes an event to EventBridge, which fans out to multiple SQS queues, each processed by a different Lambda. A heavy processing job gets handed off to Fargate. No servers. No clusters. No ops team babysitting instances at 3 am.

That's the architecture worth understanding. Lambda is the most visible part of it, but building serverless applications means thinking about the whole ecosystem — and knowing which managed service fits each job, Lambda or otherwise.