Your Lambda Was Correct. It Was Also a Disaster.

In case you don't know yet, I've published a book about serverless, and this weekend I got a message from a reader.

He'd been looking at From Zero to Production with AWS Lambda and had a sharp observation: the value wasn't landing fast enough for someone seeing it for the first time. A lot of serverless content already claims to go "beyond Hello World." What made this one actually different?

Fair point. And an honest one.

I gave him an honest answer back: the goal was never "here's how to deploy a function." It was "here's why you'd structure it this way when you have cold starts to worry about, downstream dependencies that can fail, and a team that needs to maintain this six months from now." I wrote the thing I wished existed when I was moving from toy projects to systems that had to survive production traffic, on-call rotations, and Monday morning debugging sessions.

He pushed back — in the best way. He said that the intention is clear when you explain it. But it needs to be visible without explanation. From the first few pages. He wanted to feel the pressure before I offered the structure.

Then he asked: if you had to pick one production issue that best represents the shift from toy to real system, which one would you bring forward first?

I didn't have to think long.

The S3 Loop

You have a Lambda function triggered by S3 uploads. A user uploads a CSV, your function processes it, and saves the result back to the same bucket as a JSON file.

It's a common, reasonable pattern. The code is clean. The function works. The test coverage? 100%.

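import type { S3Event } from 'aws-lambda';
import { S3 } from 'aws-sdk'; // AWS SDK v2, which provides the .promise() calls used below

const s3 = new S3();

export const handler = async (event: S3Event): Promise<void> => {
  for (const record of event.Records) {
    const bucket = record.s3.bucket.name;
    const key = record.s3.object.key;
    // Read the uploaded CSV; transform is the CSV-to-JSON helper, not shown here
    const csv = await s3.getObject({ Bucket: bucket, Key: key }).promise();
    const json = transform(csv.Body.toString());
    // Save the result next to the input, with a .json extension
    await s3.putObject({
      Bucket: bucket,
      Key: key.replace('.csv', '.json'),
      Body: JSON.stringify(json),
    }).promise();
  }
};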

You deploy it. You run a manual test by uploading a file. It processes the CSV, saves the JSON, and exits cleanly. You move on.

Then you check your logs on Monday morning.

Thousands of invocations. Concurrency at the limit. A bill with a line item you weren't expecting. And the function is still running.

Here's what happened: the function processed the CSV and saved the result as a .json file in the same bucket. That PUT triggered the Lambda again, because the trigger was watching the entire bucket, not just .csv files. So the function ran on the JSON. That key contained no '.csv' to replace, so the output key came back unchanged and the function overwrote the very object it had just read. Which triggered it again. And again.

The S3 bucket doesn't know or care that the file your Lambda just wrote was the result of processing, not a new file to process. It sees a new object. It fires an event. Lambda picks it up.
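To make the loop concrete, here is a minimal sketch that replays it in memory. Nothing in it is an AWS API: `simulate`, `shouldTrigger`, and the invocation cap are names made up for illustration, and the fake handler only mimics the key logic of the function above. The cap exists so the sketch terminates; the real pipeline has no such courtesy.

```typescript
// Hypothetical stand-in for a bucket whose notifications invoke a handler.
type Handler = (key: string, put: (key: string, body: string) => void) => void;

function simulate(
  firstKey: string,
  shouldTrigger: (key: string) => boolean,
  handler: Handler,
  maxInvocations = 50, // safety cap so the simulation itself terminates
): number {
  const queue: string[] = [firstKey]; // pending "object created" notifications
  let invocations = 0;

  while (queue.length > 0 && invocations < maxInvocations) {
    const key = queue.shift() as string;
    if (!shouldTrigger(key)) continue; // the trigger filter rejected this object
    invocations++;
    // Every put made by the handler raises a new notification.
    handler(key, (writtenKey) => queue.push(writtenKey));
  }
  return invocations;
}

// Same key logic as the handler above: '.csv' becomes '.json',
// and a key without '.csv' comes back unchanged.
const processUpload: Handler = (key, put) => {
  put(key.replace('.csv', '.json'), '{}');
};

const wholeBucket = simulate('report.csv', () => true, processUpload);
const csvOnly = simulate('report.csv', (k) => k.endsWith('.csv'), processUpload);

console.log({ wholeBucket, csvOnly }); // { wholeBucket: 50, csvOnly: 1 }
```

With the trigger watching the whole bucket, the run only stops because it hits the cap. With a trigger that accepts only `.csv` keys, it stops after a single invocation.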

No bug. No typo. No oversight in the logic. The function did exactly what it was told, in exactly the environment it was deployed to — and the environment bit back.

Why This Is the Right Story to Tell First

This is almost impossible to catch locally. You're not running the full event pipeline in your dev environment. You're invoking the function directly with a crafted event. Everything looks fine.

The feedback loop only exists in production.

And that's the point. This isn't an edge case. It's not a gotcha for beginners. It's the kind of thing that happens to people who know what they're doing, because the mistake isn't in the code — it's in the mental model.

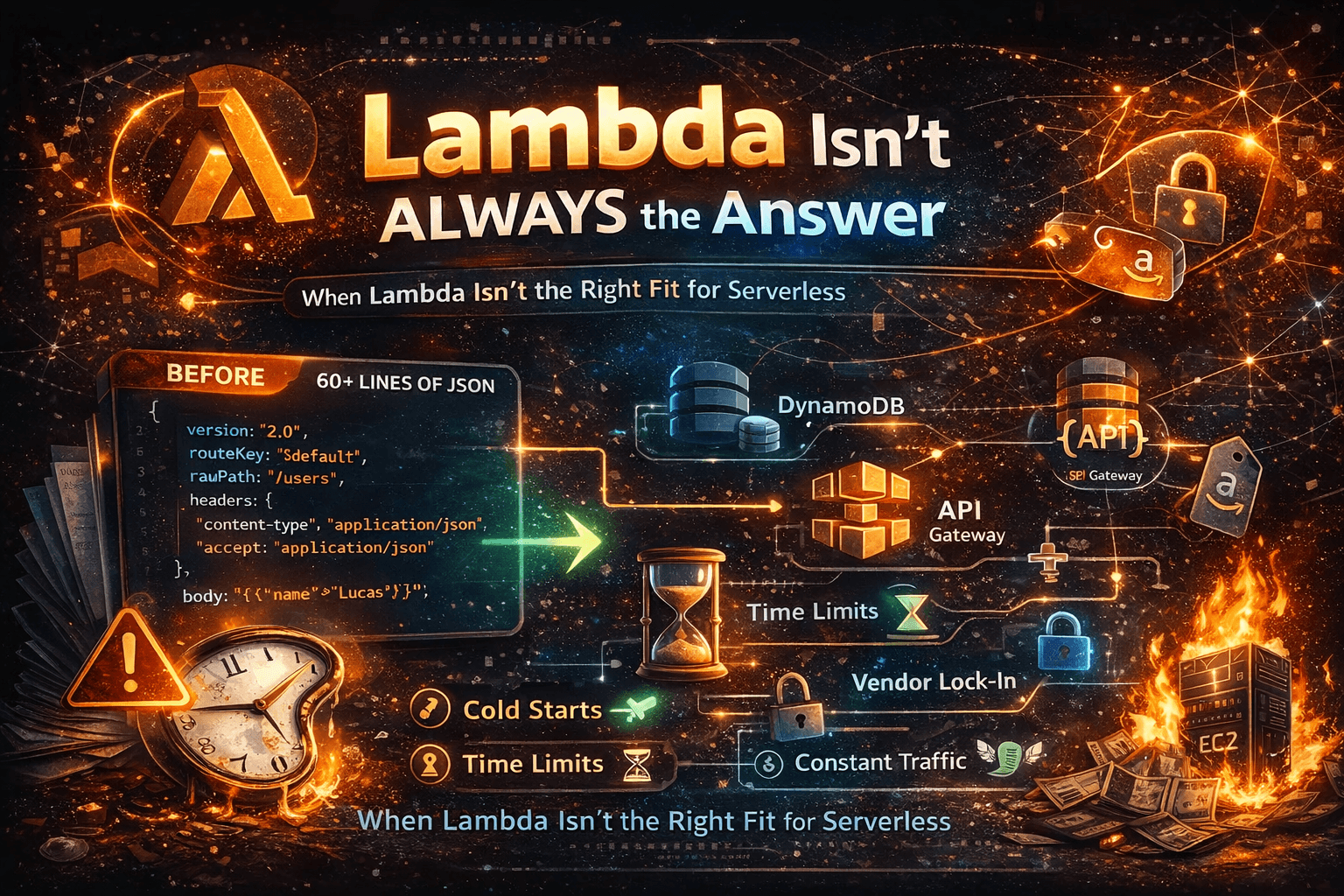

When you're learning Lambda, you think about functions in isolation. Input goes in, output comes out, done. But in production, your function doesn't exist in isolation. It exists inside an event-driven system that reacts to state changes. And state changes can come from anywhere — including from your own function.

The Fix Is Simple. The Lesson Isn't.

For this specific case, the fix takes thirty seconds: use separate buckets for input and output, or filter the trigger by suffix so it only fires on .csv files.

# serverless.yml
functions:
  processUpload:
    handler: src/handler.handler
    events:
      - s3:
          bucket: uploads-bucket
          event: s3:ObjectCreated:*
          rules:
            - suffix: .csv

Done. Loop broken.
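A guard inside the handler is a cheap second line of defense in case the trigger configuration ever drifts. What follows is a sketch under assumptions: `outputKeyFor` and the two suffix constants are hypothetical names, not part of the original handler. It also decodes the key first, since S3 delivers object keys URL-encoded in event records, with spaces as `+`.

```typescript
// Hypothetical constants: adjust to whatever your pipeline reads and writes.
const INPUT_SUFFIX = '.csv';
const OUTPUT_SUFFIX = '.json';

/** Returns the output key for an input key, or null when the object should be skipped. */
function outputKeyFor(rawKey: string): string | null {
  // Keys in S3 event records are URL-encoded, with spaces encoded as '+'.
  const key = decodeURIComponent(rawKey.replace(/\+/g, ' '));
  if (!key.endsWith(INPUT_SUFFIX)) return null; // not an input file: do nothing
  // Swap only the trailing suffix, never a '.csv' in the middle of the key.
  return key.slice(0, -INPUT_SUFFIX.length) + OUTPUT_SUFFIX;
}
```

In the handler, a `null` result would mean `continue` to the next record without writing anything, so an object the function wrote itself can never produce another write.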

But if you stop at "use a suffix filter," you've fixed the incident without absorbing the lesson.

The lesson is this: your code being correct isn't enough. It also needs to behave well in the environment it actually runs in.

Every trigger you configure, every service you write to, every queue you publish to — these are all potential feedback paths. If you don't think about them deliberately, the system will eventually find one you didn't mean to create.

The Questions I Now Ask Before Every Deploy

After enough of these moments, I've trained myself to slow down before shipping any new Lambda and ask four things:

What happens if this function fires twice on the same input? Idempotency isn't optional in event-driven systems. Retries happen. Duplicates happen. At-least-once delivery is the default, not the exception. If your function isn't safe to run twice, it will eventually run twice.
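One way to sketch this is a "claim before you work" check. Everything named here is an assumption for illustration: `ClaimStore`, `InMemoryClaimStore`, `processOnce`, and the choice of bucket, key, and sequencer as the identity of "the same input". The in-memory store only demonstrates the shape; a real one would need an atomic put-if-absent in a shared store, for example a DynamoDB conditional write.

```typescript
// Hypothetical interface: claim() returns true only for the first caller of a given id.
interface ClaimStore {
  claim(id: string): boolean;
}

// In-memory stand-in for illustration and tests. It does not survive across
// invocations or containers, so it is not a production store.
class InMemoryClaimStore implements ClaimStore {
  private readonly seen = new Set<string>();

  claim(id: string): boolean {
    if (this.seen.has(id)) return false;
    this.seen.add(id);
    return true;
  }
}

interface UploadRecord {
  bucket: string;
  key: string;
  sequencer: string; // assumed to identify one specific write to the key
}

/** Runs work at most once per record identity; returns false for duplicates. */
function processOnce(
  record: UploadRecord,
  store: ClaimStore,
  work: (record: UploadRecord) => void,
): boolean {
  const id = `${record.bucket}/${record.key}#${record.sequencer}`;
  if (!store.claim(id)) return false; // duplicate delivery: skip the work
  // Note: if work throws, the claim stays in place. Releasing it on failure
  // (so a retry can run) is deliberately left out of this sketch.
  work(record);
  return true;
}
```

The design choice worth arguing about is what goes into `id`. Too narrow and legitimate re-uploads are dropped; too broad and duplicates slip through.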

What does this function write to, and could that trigger something? Trace every write: S3 puts, DynamoDB streams, SQS sends, EventBridge publishes. For each one — what's listening? Could that listener, directly or indirectly, trigger this function again?
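The tracing can be done on paper, but it also fits in a few lines of code. This is a rough sketch, assuming you can write down "who triggers whom" as a directed graph by hand; `findFeedbackPath` and the resource names are hypothetical, and nothing here queries AWS.

```typescript
// Directed edges: "an event from / a write to X can reach Y".
type TriggerGraph = Map<string, string[]>;

/** Returns one cycle that passes through start, or null if there is none. */
function findFeedbackPath(graph: TriggerGraph, start: string): string[] | null {
  const visited = new Set<string>();
  const path: string[] = [];

  const visit = (node: string): boolean => {
    path.push(node);
    for (const next of graph.get(node) ?? []) {
      if (next === start) {
        path.push(start); // closed the loop
        return true;
      }
      if (!visited.has(next)) {
        visited.add(next);
        if (visit(next)) return true;
      }
    }
    path.pop(); // dead end: back out
    return false;
  };

  return visit(start) ? path : null;
}

// The setup from the story: the function writes to the bucket that triggers it.
const sameBucket: TriggerGraph = new Map<string, string[]>([
  ['lambda:processUpload', ['s3:uploads-bucket']],
  ['s3:uploads-bucket', ['lambda:processUpload']],
]);

// Separate input and output buckets: nothing listens on the output.
const separateBuckets: TriggerGraph = new Map<string, string[]>([
  ['lambda:processUpload', ['s3:results-bucket']],
  ['s3:uploads-bucket', ['lambda:processUpload']],
  ['s3:results-bucket', []],
]);

console.log(findFeedbackPath(sameBucket, 'lambda:processUpload'));
console.log(findFeedbackPath(separateBuckets, 'lambda:processUpload'));
```

The first call returns the path from the function to the bucket and back; the second returns `null`. The graph is only as good as the edges you remember to write down, which is exactly the exercise.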

What happens at scale? A function that behaves fine at 10 concurrent invocations can behave very differently at 500. Downstream services start throttling. Timeouts compound. Retries amplify the load rather than absorbing it. Think through the failure modes before traffic does it for you.
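The amplification is easy to underestimate, so here is the arithmetic as a small sketch. The function name and the numbers are illustrative assumptions, not measurements: one layer that retries a failed invocation twice, and a function that retries its own downstream call three times.

```typescript
/** Worst-case calls reaching the last dependency when every layer retries on failure. */
function worstCaseAttempts(retriesPerLayer: number[]): number {
  // Each layer multiplies the attempts below it by (1 original try + its retries).
  return retriesPerLayer.reduce((total, retries) => total * (1 + retries), 1);
}

// Illustrative numbers only.
const perEvent = worstCaseAttempts([2, 3]); // (1 + 2) * (1 + 3) = 12
const atFiveHundred = 500 * perEvent; // 6000 downstream calls for 500 failing events

console.log({ perEvent, atFiveHundred });
```

A downstream service that is already throttling at 500 requests is now looking at up to 6000, which is how retries end up amplifying load instead of absorbing it.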

What would I be looking at during a Monday morning incident? If this goes wrong at 2 a.m., what does it look like in CloudWatch? How do I know something is wrong before my bill tells me? Logs and alarms aren't an afterthought. They're part of the feature.
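A small step in that direction is logging one JSON object per line, so the fields are searchable later instead of buried in free text. This is a minimal sketch; `logEvent` and the field names are hypothetical, and the bucket and key values are just the ones from the example above.

```typescript
type LogFields = Record<string, string | number | boolean>;

/** Writes one JSON object per line and returns it, which keeps it easy to test. */
function logEvent(
  level: 'info' | 'warn' | 'error',
  message: string,
  fields: LogFields = {},
): string {
  // level and message come last so extra fields cannot overwrite them.
  const line = JSON.stringify({ ...fields, level, message });
  console.log(line);
  return line;
}

// Example: the handler was invoked for an object it should never process.
const skipped = logEvent('warn', 'skipped object that is not an input file', {
  bucket: 'uploads-bucket',
  key: 'report.json',
});
```

A `warn` line like this is something a CloudWatch Logs metric filter can count, and a count is something you can alarm on well before the bill arrives.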

Correctness Is Table Stakes

The bar for production isn't "does it work." It's "does it behave well when the environment doesn't cooperate."

That's what I tried to answer for my reader. It's also what I try to establish early in everything I write and build. Not "here's how Lambda works." But: here's what happens on a Monday morning when something goes wrong — and here's how you build systems that survive it.

Fix the mental model, and you stop making a whole class of mistakes at once.

This is the kind of thing I go deep on in From Zero to Production with AWS Lambda — no fluff, written for developers who are done with toy examples. And if you want more of this every week, you're already in the right place.

Disclosure: This post was edited with AI assistance for clarity and flow—my experiences, analysis, and conclusions remain my own.